QA Process: Consolidate Testing and Bug Fixes in One Flow

Gaps between QA and developers mean bugs get caught too late. Learn how to manage QA testing in Alios with a test checklist and a bug flow.

QA tested, found a bug, wrote it in Slack. Did the developer see it? Maybe. Did they fix it? Unknown. Did QA test it again? Unclear. Did it go to production? Hopefully it's fixed.

This scenario isn't an exaggeration. In many startups, QA-developer communication runs through messaging: Slack messages, emails, sometimes a screenshot sent over WhatsApp. Which bug was fixed, which is still waiting, which was "pushed to the next version"? None of it is trackable.

The result: the same bug gets reported twice, fixed bugs don't get retested, and nobody can say what is actually ready before a production release.

Where Does the QA-Dev Communication Gap Come From?

There are three root causes of the gap between QA and developers.

Test output isn't structured. A message like "there's an issue over here" doesn't give the developer enough to act on. Which environment was it tested in, what steps reproduce it, what was the expected behavior, what actually happened? Without these written down, the developer doesn't fully know what to fix.

Bug status is invisible. A bug was reported — then what happened? Did the developer pick it up, add it to their priority queue, fix it, open a PR? When this information is buried in the messaging channel, QA has to keep asking "was this fixed?"

There's no closing loop. The developer says "I fixed it." Does QA know they need to retest? In which environment, on which version? When this coordination has to be rebuilt for every bug, the team loses serious time.

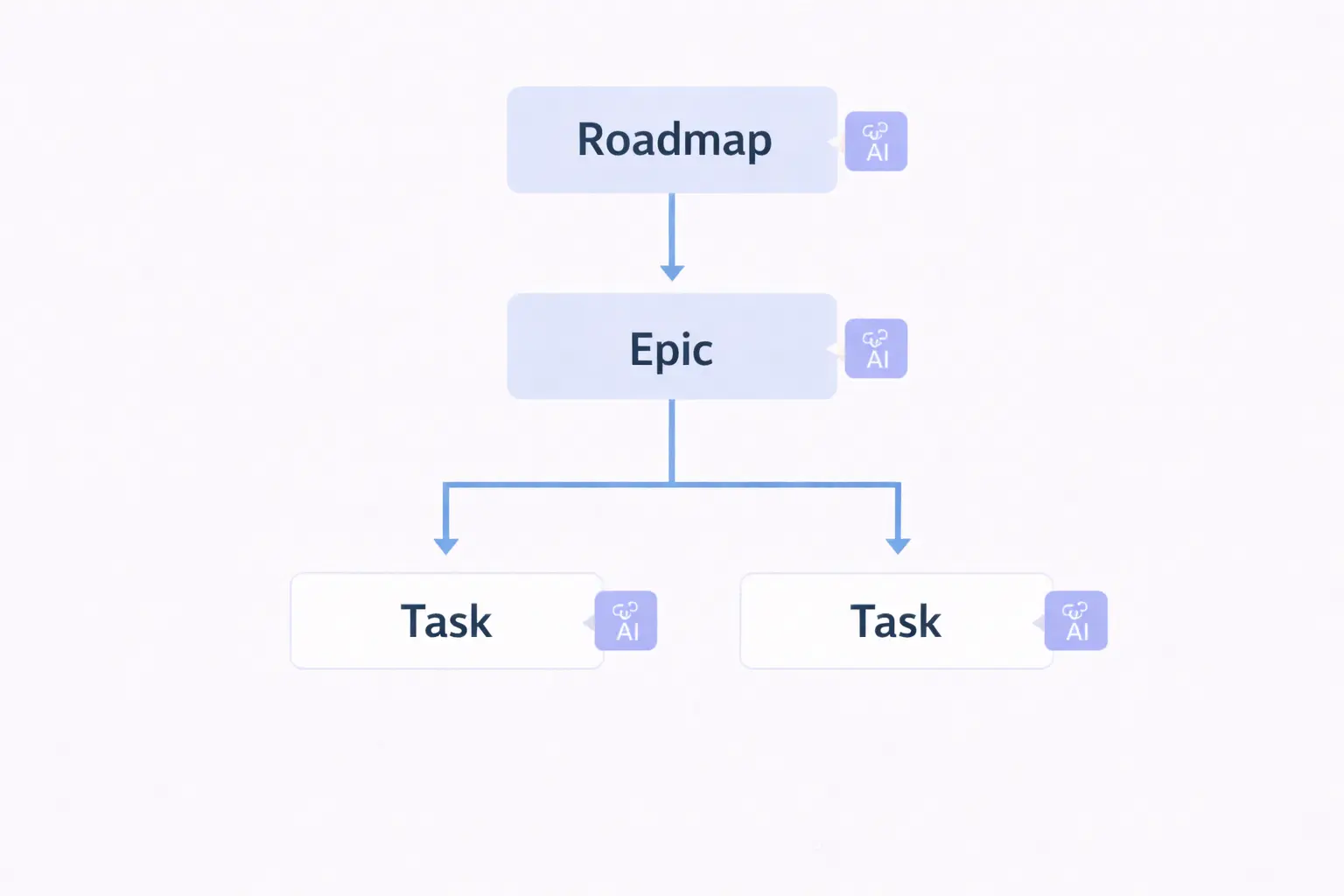

The QA Flow in Alios: A Two-Layer Model

QA test management in Alios works across two layers: a test checklist and a bug list. The two are separate node structures, but they are linked to each other.

Layer 1 — Test Checklist Node

A test checklist node is opened for each release or feature. This node is QA's working document.

📁 TEST — [Feature/Release name] — [Date]

Owner: [QA name]

Deadline: [Test completion date]

Environment: [Staging URL or version]

📋 TEST SCENARIOS

Happy Path:

- [ ] [Scenario 1 — step by step]

- [ ] [Scenario 2 — step by step]

- [ ] [Scenario 3 — step by step]

Edge Cases:

- [ ] [Empty input scenario]

- [ ] [Maximum value scenario]

- [ ] [Concurrent operation scenario]

Error States:

- [ ] [Error scenario 1 — expected message/behavior]

- [ ] [Error scenario 2 — expected message/behavior]

Regression:

- [ ] [Existing feature 1 that may be affected]

- [ ] [Existing feature 2 that may be affected]

Device / Browser:

- [ ] Chrome — desktop

- [ ] Safari — desktop

- [ ] Chrome — mobile (Android)

- [ ] Safari — mobile (iOS)

📊 TEST RESULT

Start: [Date]

Completed: [Date]

Total scenarios: [N]

Passed: [N] | Failed: [N] | Blocked: [N]

Overall assessment:

[ ] Ready to go to production

[ ] Critical bug exists — cannot go to production

[ ] Minor bug exists — acceptable / push to next version

Layer 2 — Bug Nodes

Every bug found during testing is opened as a separate node and linked to the test checklist node.

📌 BUG — [Short description]

Status: Reported

Priority: [Critical / High / Medium / Low]

Owner: [Developer name]

Deadline: [Expected fix date]

Linked test: [Test checklist node name]

🔍 BUG DETAIL

Environment: [Staging / Production — URL — version]

Browser/Device: [Chrome 122 / iPhone 14 etc.]

Account type: [Admin / Regular user / etc.]

Steps to reproduce:

1. [Step 1]

2. [Step 2]

3. [Step 3]

Expected behavior:

[What should have happened]

Actual behavior:

[What happened]

Screenshot / Video: [Link or attachment]

Console error: [Copy if available]

🔧 DEVELOPER NOTE

[What the developer found, how they fixed it, PR link]

PR: [Link]

Fix version: [Branch or tag]

✅ QA VERIFICATION

[ ] Fix tested in staging

[ ] Scenario could not be reproduced again

[ ] Regression check done

[ ] Node closed: [Date]

Bug Statuses: Five Stages

A bug node moves through five stages, with Deferred as a sixth status for bugs that won't be fixed in this version:

🔴 Reported → QA found it, developer hasn't picked it up yet

🟡 Under Review → Developer picked it up, analyzing

🔵 Being Fixed → Active development

🟣 Fix Ready → PR merged, waiting for QA test

✅ Verified → QA approved, node closed

⏸ Deferred → No fix in this version, pushed to later

On each status change, add a brief note to the description: "PR #67 opened," "deployed to staging, can be tested," "won't be fixed in this version (reason: out of scope)."

With these notes, QA can answer "was this fixed?" by opening the node instead of asking.
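The status flow above can be read as a small state machine. The following is a minimal Python sketch, not Alios functionality: `BugNode`, `ALLOWED`, and `move_to` are hypothetical names, and the transition map itself is an assumption (for example, sending a bug from Fix Ready back to Being Fixed when a retest fails), since the article lists the statuses but not every permitted move.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    REPORTED = "Reported"
    UNDER_REVIEW = "Under Review"
    BEING_FIXED = "Being Fixed"
    FIX_READY = "Fix Ready"
    VERIFIED = "Verified"
    DEFERRED = "Deferred"


# Assumed transition map: which status may follow which.
ALLOWED = {
    Status.REPORTED: {Status.UNDER_REVIEW, Status.DEFERRED},
    Status.UNDER_REVIEW: {Status.BEING_FIXED, Status.DEFERRED},
    Status.BEING_FIXED: {Status.FIX_READY, Status.DEFERRED},
    Status.FIX_READY: {Status.VERIFIED, Status.BEING_FIXED},  # back if retest fails
    Status.VERIFIED: set(),                                   # closed, no further moves
    Status.DEFERRED: {Status.UNDER_REVIEW},                   # picked up in a later version
}


@dataclass
class BugNode:
    """Hypothetical in-memory stand-in for a bug node."""
    title: str
    status: Status = Status.REPORTED
    notes: list[str] = field(default_factory=list)

    def move_to(self, new_status: Status, note: str) -> None:
        """Change status only along an allowed path, and require a note."""
        if new_status not in ALLOWED[self.status]:
            raise ValueError(
                f"cannot move from {self.status.value} to {new_status.value}"
            )
        if not note.strip():
            raise ValueError("a status change needs a brief note")
        self.status = new_status
        self.notes.append(f"{new_status.value}: {note}")


bug = BugNode("Checkout button unresponsive")
bug.move_to(Status.UNDER_REVIEW, "picked up, analyzing")
bug.move_to(Status.BEING_FIXED, "PR opened")
bug.move_to(Status.FIX_READY, "deployed to staging, can be tested")
```

Requiring a note on every move is what keeps the node readable on its own: the `notes` list becomes the history QA would otherwise have to ask for.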

Closing Criteria

For Bug Closure

A bug node closes when these conditions are met:

✅ Fix deployed to staging

✅ QA could not reproduce the bug with the same steps

✅ Related regression scenarios passed

✅ Developer added PR link to node

✅ QA filled in the verification section and closed the node

Important: the bug isn't closed by the developer. QA closes it. This separates "I fixed it" from "it's verified."
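The closure conditions can also be expressed as a simple gate. This is a hedged Python sketch under stated assumptions: `BugVerification`, `unmet_conditions`, and `close_bug` are hypothetical names, the role string `"qa"` is an illustrative convention, and nothing here reflects how Alios stores or enforces these fields.

```python
from dataclasses import dataclass


@dataclass
class BugVerification:
    """Hypothetical record of the closure conditions for one bug node."""
    fix_on_staging: bool = False
    not_reproduced: bool = False
    regression_passed: bool = False
    pr_link: str = ""
    verification_filled: bool = False


def unmet_conditions(v: BugVerification) -> list[str]:
    """Return the closure conditions that are still open."""
    checks = {
        "Fix deployed to staging": v.fix_on_staging,
        "QA could not reproduce the bug with the same steps": v.not_reproduced,
        "Related regression scenarios passed": v.regression_passed,
        "Developer added PR link to node": bool(v.pr_link.strip()),
        "QA filled in the verification section": v.verification_filled,
    }
    return [name for name, ok in checks.items() if not ok]


def close_bug(v: BugVerification, closed_by_role: str) -> str:
    """Close the bug only if QA is closing it and every condition is met."""
    if closed_by_role != "qa":  # assumed role label
        raise PermissionError("only QA closes a bug node")
    missing = unmet_conditions(v)
    if missing:
        raise ValueError("cannot close, unmet: " + "; ".join(missing))
    return "Verified"
```

A developer calling `close_bug(..., "developer")` is rejected even when every box is ticked, which mirrors the rule that "I fixed it" and "it's verified" are different statements.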

For Release Closure

The test checklist node closes when these conditions are met:

✅ All test scenarios run

✅ All critical and high-priority bugs are "Verified"

✅ List and justification of deferred bugs written

✅ "Ready to go to production" approval given

✅ Test summary written into node description

The decision "if no critical bug exists, we can go live" is a criterion the team sets. But this criterion needs to be written down in advance, not debated on release day.
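Writing the release criterion down in advance can be as literal as encoding it. The sketch below is one possible Python reading of the release conditions, with assumptions labeled: `Bug`, `release_blockers`, and the `blocking_priorities` parameter are hypothetical, statuses and priorities are plain strings taken from the templates, and the approval and test-summary conditions are left out because they are human sign-offs rather than computed checks.

```python
from dataclasses import dataclass


@dataclass
class Bug:
    """Hypothetical minimal view of a bug node for the release check."""
    title: str
    priority: str              # "Critical", "High", "Medium" or "Low"
    status: str                # e.g. "Verified", "Deferred", "Fix Ready"
    deferral_reason: str = ""  # justification, expected when status is "Deferred"


def release_blockers(bugs, scenarios_run, scenarios_total,
                     blocking_priorities=("Critical", "High")):
    """List everything that prevents closing the test checklist node.

    blocking_priorities is the team's own written-down criterion (assumed
    default: critical and high-priority bugs must be Verified).
    """
    blockers = []
    if scenarios_run < scenarios_total:
        blockers.append(
            f"{scenarios_total - scenarios_run} test scenario(s) not run"
        )
    for bug in bugs:
        if bug.priority in blocking_priorities and bug.status != "Verified":
            blockers.append(f"{bug.priority} bug not verified: {bug.title}")
        elif bug.status == "Deferred" and not bug.deferral_reason.strip():
            blockers.append(f"Deferred bug has no justification: {bug.title}")
    return blockers


bugs = [
    Bug("Login fails on empty password", "Critical", "Verified"),
    Bug("Typo in footer", "Low", "Deferred", "out of scope"),
]
print(release_blockers(bugs, scenarios_run=12, scenarios_total=12))  # prints []
```

An empty list means the checklist conditions covered here are satisfied. Any entry in the list is a concrete reason the release is not ready, which is what turns the release decision into a check rather than a discussion.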

Weekly QA Routine

To keep the QA process sustainable, a weekly routine is enough:

⏱️ MONDAY — Sprint start (15 minutes)

→ Features to test this sprint identified

→ Test checklist node opened for each feature

→ Test scenarios drafted

⏱️ DURING THE WEEK — Testing process

→ Every bug found opened as a separate node

→ Fixed bugs tested when moved to "Fix Ready"

→ Node statuses kept current

⏱️ FRIDAY — Sprint close (20 minutes)

→ Status of open bugs reviewed

→ Deferred bugs documented

→ Test checklist node closed or carried to next sprint

→ Release decision made

Final Thought

When QA test management moves out of messaging, both QA and the developer benefit. QA doesn't have to ask "was this fixed?" because the answer is in the bug node. The developer knows which bug is the priority because it's written in the node. The release decision becomes a checklist check, not a debate.

Using test checklists and bug nodes together in Alios builds this visibility. The "there's an issue over here" Slack message becomes a structured node. Closing criteria eliminate ambiguity.

Fewer "was this fixed?" questions, less redundant retesting, more reliable releases.