AI PR Review Bottleneck: Managing with SLA in Alios

AI generates code faster but review queues don't keep up. Learn how to manage the AI PR review bottleneck with SLA tracking and WAITING status in Alios.

AI writes code fast. The developer opens a PR. Then waits.

In traditional development, writing and reviewing were roughly balanced: writing a feature took about as long as reviewing it. With AI, this ratio broke. Writing time halved or quartered. Review time didn't change.

The result: PR queues grow faster than they get cleared. Three developers each using AI to write features at twice the speed means six PRs arriving in the time the team could previously review three. The bottleneck moved from writing to reviewing.
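The arithmetic above can be sketched in a few lines. This is a toy model, not a measurement: it assumes constant rates per period, and the function name `backlog_after` is made up for illustration.

```python
def backlog_after(periods: int, opened_per_period: int, reviewed_per_period: int) -> int:
    """Unreviewed PRs left after `periods`, assuming constant open and review rates."""
    backlog = 0
    for _ in range(periods):
        # New PRs arrive, reviewers clear what they can; the queue never goes below zero.
        backlog = max(0, backlog + opened_per_period - reviewed_per_period)
    return backlog


# Before AI: three PRs opened and three reviewed per period, so the queue stays empty.
print(backlog_after(4, 3, 3))  # 0

# With AI at twice the writing speed: six opened, still three reviewed per period.
print(backlog_after(4, 6, 3))  # 12
```

Under these assumptions the queue grows by three PRs every period and never recovers on its own.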

How AI Changes the Review Equation

The PR review bottleneck in AI-assisted teams has a different character than in traditional teams.

Volume increases sharply. More code gets written in the same time. Each piece needs review. Reviewers face a backlog they didn't face before — not because they're working slower, but because input volume increased.

Context density increases. AI tools tend to produce larger, more comprehensive changesets. The reviewer needs to understand not just whether the code works, but whether the approach is correct, whether the AI made sound architectural choices, and whether anything got generated that looks correct but behaves unexpectedly at the edges.

Review quality can erode under pressure. When the review queue is long and there's implicit pressure to clear it, reviewers approve faster and less thoroughly. This defeats the purpose — AI-generated code needs at minimum the same rigor of review as hand-written code, and arguably more.

The WAITING gap widens. Developers finish PRs faster and spend proportionally more time waiting. That wait — untracked — becomes invisible lost time. The developer context-switches to something else, then needs to context-switch back when review finally arrives.

The SLA Framework for AI PR Review

Managing AI PR review with an SLA isn't about creating bureaucracy. It's about making implicit expectations explicit so everyone operates from the same understanding.

The SLA has two components: review start time (how quickly a reviewer picks up the PR) and review completion time (how long the full review takes once started).

📋 AI PR REVIEW SLA

Critical (hotfix, production bug):

→ Review start: within 1 hour

→ Review complete: same day

→ Note: reduced review scope acceptable; focus on correctness and safety

High priority (active sprint task, AI-generated):

→ Review start: within 4 hours

→ Review complete: same day or next morning

→ Note: full review including edge cases and integration check required

Normal (feature, AI-generated module):

→ Review start: within 24 hours

→ Review complete: 48 hours

→ Note: complete review including AI connection analysis (what prompt produced this, does the approach hold)

Small (documentation, minor fix):

→ Review start: within 48 hours

→ Review complete: 72 hours

→ Note: standard review, no special AI check needed

This SLA lives as a node in Alios, not in a Slack message that gets buried or in a doc nobody reads. When a PR is opened and the task node moves to WAITING, the reviewer and deadline get assigned according to the SLA immediately.
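As a rough illustration, the SLA table can be expressed as data plus a small deadline calculation. This is a sketch under stated assumptions, not how Alios stores or computes anything: the names `SlaTier`, `SLA`, and `review_start_deadline` are hypothetical, the start window is counted in wall-clock hours (not business hours), and the completion target is kept as the table's own wording because "same day" has no fixed hour count.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class SlaTier:
    start_within: timedelta  # how quickly a reviewer must pick up the PR
    complete: str            # completion target, kept as the table's wording
    note: str                # expected review scope


# Values copied from the SLA table above; the structure itself is an assumption.
SLA = {
    "Critical": SlaTier(timedelta(hours=1), "same day",
                        "reduced scope acceptable; focus on correctness and safety"),
    "High": SlaTier(timedelta(hours=4), "same day or next morning",
                    "full review including edge cases and integration check"),
    "Normal": SlaTier(timedelta(hours=24), "48 hours",
                      "complete review including AI connection analysis"),
    "Small": SlaTier(timedelta(hours=48), "72 hours",
                     "standard review, no special AI check needed"),
}


def review_start_deadline(priority: str, opened_at: datetime) -> datetime:
    """Latest time a reviewer should pick up the PR (wall-clock hours, an assumption)."""
    return opened_at + SLA[priority].start_within


opened = datetime(2024, 3, 4, 9, 0)  # example timestamp, chosen arbitrarily
print(review_start_deadline("High", opened))    # 2024-03-04 13:00:00
print(review_start_deadline("Normal", opened))  # 2024-03-05 09:00:00
```

A team that only counts working hours would need a different calculation; the point here is only that the deadline follows mechanically from the priority and the time the PR was opened.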

WAITING + SLA: How They Work Together

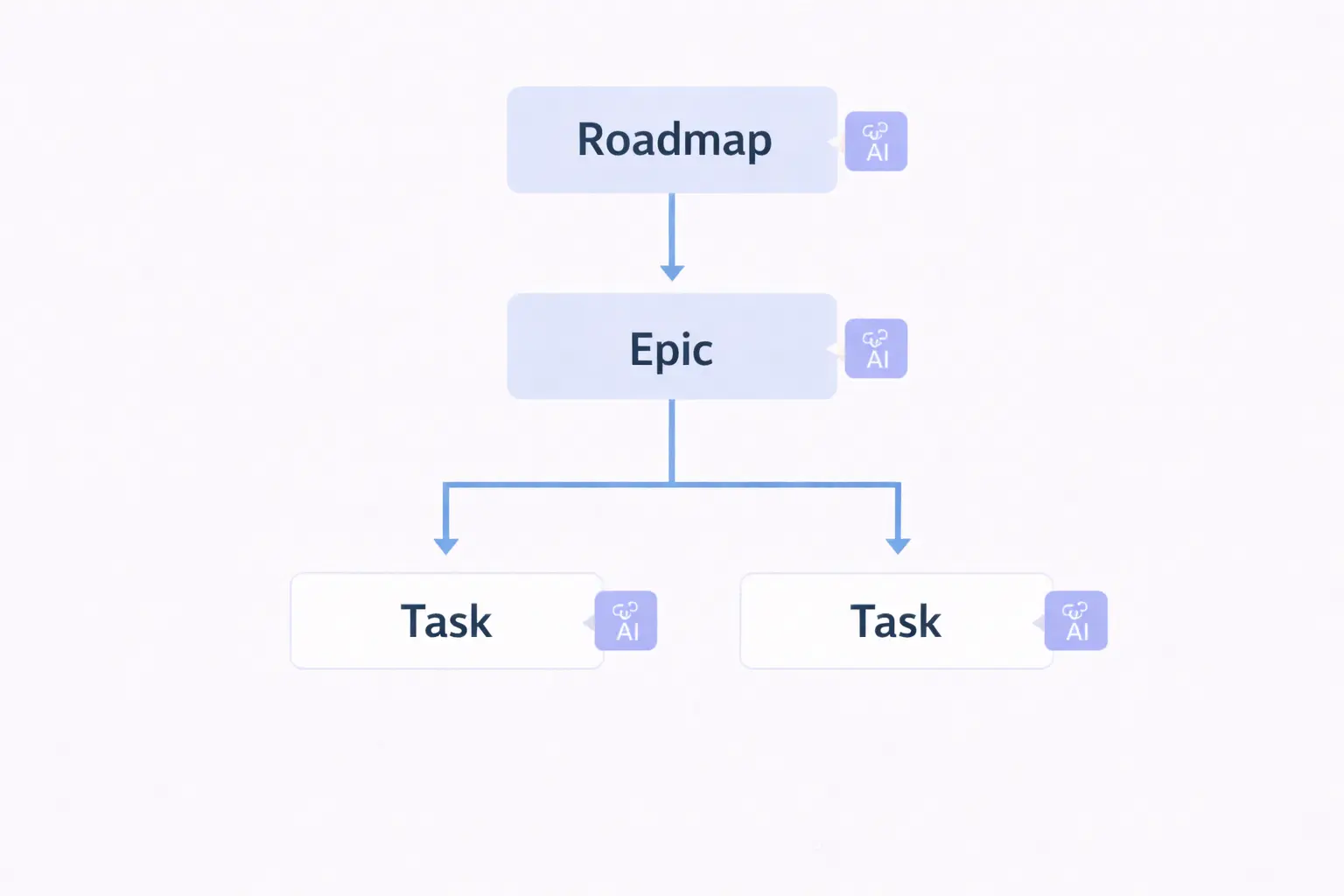

When a developer opens a PR, the task node moves from IN PROGRESS to WAITING with this information:

⏳ WAITING — PR OPENED [Date / Time]

PR link: [GitHub / GitLab URL]

Branch: [Branch name]

Reviewer: [Assigned person]

Priority: [Critical / High / Normal / Small]

Review deadline: [Calculated per SLA]

AI summary:

What AI produced: [1-2 sentences: which tool, what prompt, what was generated]

What needs human judgment: [Where the reviewer should focus: edge cases, architectural choice, integration risk]

Changes in scope:

[What changed, what was added, what was removed]

Test coverage: [What was tested before opening]

Reviewer notes needed on:

[Any specific area where the reviewer's attention is requested]

The "AI summary" section is what differentiates this from a standard PR review node. The developer who opened the PR, who has full context on what the AI produced and why, writes a handoff note for the reviewer. This reduces the reviewer's ramp-up time and focuses their attention where it matters.
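One way to picture the WAITING node is as a record whose handoff fields must be filled in before the PR is handed over. The sketch below is illustrative only: `WaitingNode` and `missing_handoff_fields` are hypothetical names rather than an Alios API, the field names are adapted from the template above, and the URL is a placeholder.

```python
from dataclasses import dataclass, fields
from datetime import datetime


@dataclass
class WaitingNode:
    # Fields mirror the WAITING template above; names are illustrative.
    opened_at: datetime
    pr_link: str
    branch: str
    reviewer: str
    priority: str
    review_deadline: datetime
    ai_produced: str = ""           # which tool, what prompt, what was generated
    needs_human_judgment: str = ""  # where the reviewer should focus
    scope_changes: str = ""         # what changed, was added, was removed
    test_coverage: str = ""         # what was tested before opening
    reviewer_notes: str = ""        # areas where attention is requested


def missing_handoff_fields(node: WaitingNode) -> list[str]:
    """Names of text fields that are still empty, in template order."""
    return [
        f.name
        for f in fields(node)
        if isinstance(getattr(node, f.name), str) and not getattr(node, f.name).strip()
    ]


node = WaitingNode(
    opened_at=datetime(2024, 3, 4, 9, 0),
    pr_link="https://example.com/pr/placeholder",  # placeholder, not a real PR
    branch="feature/example",
    reviewer="reviewer-a",
    priority="High",
    review_deadline=datetime(2024, 3, 4, 13, 0),
    ai_produced="Assistant generated the retry wrapper from a short prompt.",
)
print(missing_handoff_fields(node))
# ['needs_human_judgment', 'scope_changes', 'test_coverage', 'reviewer_notes']
```

A check like this makes an incomplete handoff note visible to the developer before the reviewer has to ask for it.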

Reviewer's AI-Aware Review Checklist

When a reviewer picks up an AI-generated PR, the review isn't just "does this code work?" It's also "did the AI make good decisions here?"

👀 AI PR REVIEW CHECKLIST

Code correctness:

- [ ] Logic is correct for the stated purpose

- [ ] Edge cases are handled

- [ ] Error handling is appropriate

- [ ] No obvious security issues

AI-specific checks:

- [ ] The approach AI chose is the right approach for this codebase (not just generically correct)

- [ ] No hallucinated method calls or APIs

- [ ] No subtle logic errors that look correct but fail on edge inputs

- [ ] Context the AI didn't have: are there project-specific patterns this code violates?

Integration check:

- [ ] Plays well with existing modules

- [ ] No unexpected side effects on adjacent code

- [ ] Database/API interactions are correct

Test coverage:

- [ ] Tests exist for the happy path

- [ ] At least one edge case is tested

- [ ] If AI generated the tests: are the tests actually testing the right things?

Review decision:

[ ] Approved: merge ready

[ ] Approved with minor comments: merge after fix

[ ] Changes requested: [specific items]

[ ] Needs discussion: [what needs alignment]

The "AI-specific checks" section is the addition that makes this checklist different. Standard PR review tools don't prompt reviewers to think about whether the AI's architectural choice was appropriate for the specific project context. This section does.

When SLA Is Exceeded: Escalation Protocol

When a review deadline passes without action, the WAITING node makes this visible immediately — the deadline is written in the node, and any overdue WAITING items surface in the Monday and Wednesday risk scans.

The escalation protocol is simple:

SLA EXCEEDED — ESCALATION STEPS

Same day as deadline:

→ Reviewer gets a direct message, not a Slack channel ping

→ Message: "PR [name] review deadline passed, can you pick it up today?"

Next morning (deadline + 1 day):

→ Team lead reassigns if reviewer is blocked

→ Alternative reviewer identified

→ WAITING node updated with new reviewer and deadline

Deadline + 2 days:

→ PR review treated as sprint blocker

→ Brought to next standup or async check-in

→ If still no resolution: split the PR or reduce scope to unblock merge

Log the pattern:

→ If the same reviewer's SLA is exceeded repeatedly, this is a capacity signal, not a performance issue; workload needs to be rebalanced

Logging the pattern is important. A review queue that consistently backs up isn't a motivation problem; it's a structural problem. If one person is the review bottleneck, the solution is distributing review capacity, not asking that person to work faster.
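The escalation steps and the pattern log can be sketched as two small functions. Both are assumptions for illustration: `escalation_step` counts calendar days past the deadline (the protocol does not say whether weekends count), and `capacity_signals` uses a threshold of three misses because the protocol only says "repeatedly".

```python
from collections import Counter
from datetime import datetime


def escalation_step(deadline: datetime, now: datetime) -> str:
    """Map an overdue review to the escalation step above (calendar days, an assumption)."""
    if now <= deadline:
        return "within SLA"
    days_over = (now.date() - deadline.date()).days
    if days_over == 0:
        return "direct message to reviewer"
    if days_over == 1:
        return "team lead reassigns; update WAITING node"
    return "treat as sprint blocker; raise at standup, split PR or reduce scope"


def capacity_signals(missed_by: list[str], threshold: int = 3) -> list[str]:
    """Reviewers whose SLA misses reach the (hypothetical) threshold."""
    counts = Counter(missed_by)
    return sorted(name for name, n in counts.items() if n >= threshold)


deadline = datetime(2024, 3, 4, 15, 0)  # example deadline, chosen arbitrarily
print(escalation_step(deadline, datetime(2024, 3, 4, 17, 0)))  # direct message to reviewer
print(escalation_step(deadline, datetime(2024, 3, 5, 9, 0)))   # team lead reassigns; update WAITING node
print(capacity_signals(["ana", "ben", "ana", "ana"]))          # ['ana']
```

The second function returns names only as a prompt to rebalance workload, in line with the protocol's framing of repeated misses as a capacity signal.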

Monday PR Review Scan

Every Monday morning, run a 10-minute scan of all WAITING nodes in review status:

⏱️ 10 MINUTES — MONDAY PR SCAN

[ ] List all nodes in WAITING (PR opened status)

→ How long has each been waiting?

→ Is any SLA already exceeded?

[ ] List all nodes in REVIEW (reviewer active)

→ Any reviewer working on the same PR for more than 24 hours?

→ Do they need help or a second reviewer?

[ ] List Fix Requested nodes older than 48 hours

→ Has the developer not addressed comments?

→ Is there a blocker preventing the fix?

[ ] Identify PRs that must merge this week

→ Do their review timelines fit the sprint goal?

→ If not: who moves what to make it fit?

Final Thought

AI changes the input volume to the review queue without changing the review queue's capacity. Left unmanaged, this gap produces the same outcome every sprint: PRs pile up, reviews happen under pressure, quality erodes, or developers wait too long to maintain context.

The SLA framework, WAITING status, and AI-aware review checklist in Alios don't solve the fundamental capacity question; that requires either more reviewers or more review time. But they make the problem visible in real time, while something can still be done about it, rather than after the sprint closes.

AI suggests, the developer opens the PR, the SLA tells the reviewer when and how. The queue doesn't disappear — but it doesn't pile up silently either.