AI Code Quality Management: Test Checklist and Bug Flow

AI accelerates code production but doesn't remove the need for testing. Learn how to build AI code quality management in Alios with a test checklist and bug flow.

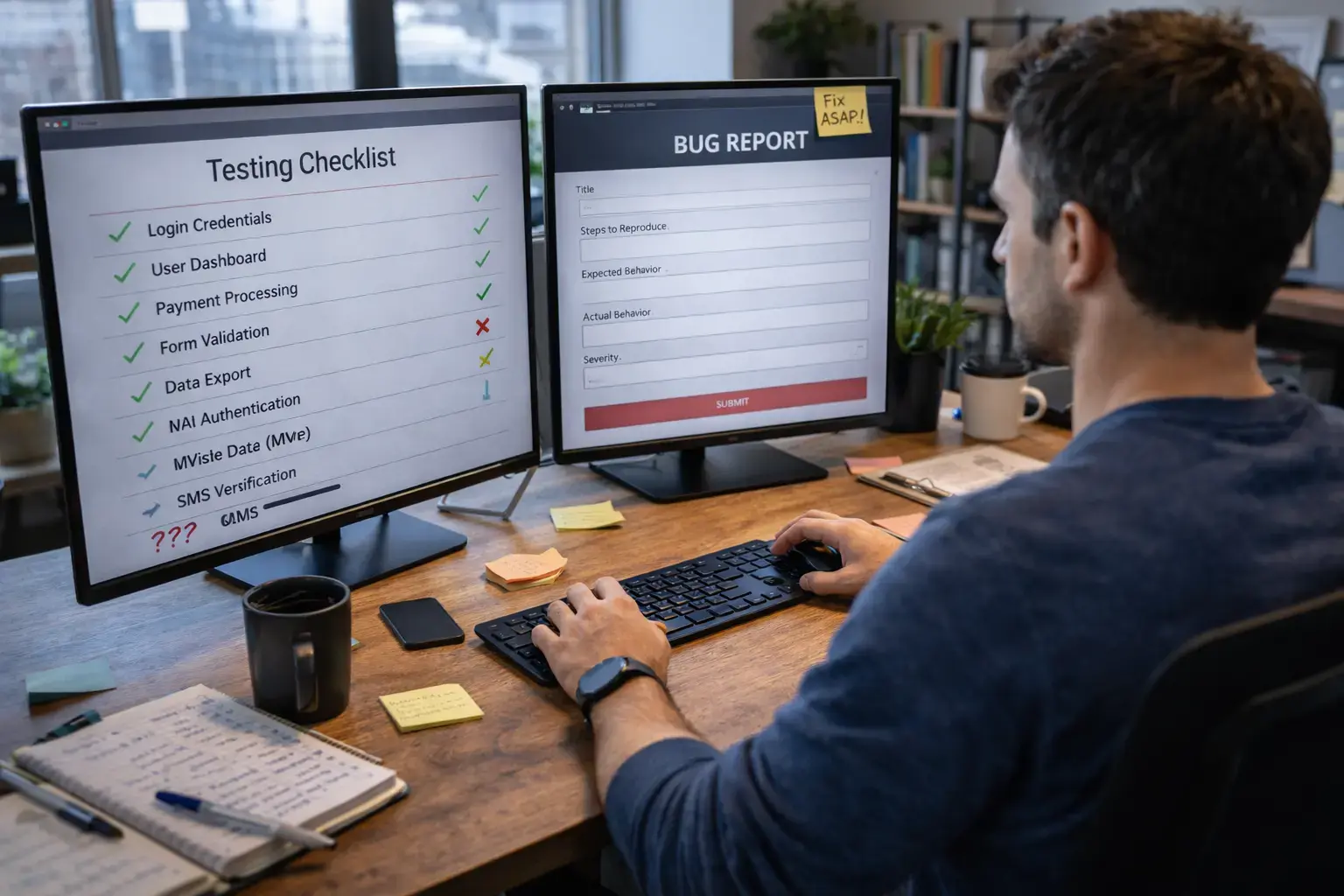

When code production accelerates with AI tools, a paradox emerges: writing time gets shorter, but the need for testing doesn't decrease. It increases.

The reason is simple. AI produces code that satisfies the given prompt. But how that code handles edge cases, how it integrates with the existing system, how it will behave in production — testing all of this is still human work. AI writes code without asking these questions. The developer who skips testing moves forward with the assumption that "AI wrote it, it works."

This assumption breaks in production.

Why AI Code Requires More Testing

In traditional development, a mental testing process runs while the developer writes code line by line. Questions like "what happens in this condition?" and "what gets returned in that case?" get asked as each line is written. This process is slow, but it builds quality into the code as it is written.

AI skips this mental process. The developer writes the prompt, receives the output, integrates it. The mental testing process that would have intervened doesn't happen. When this gap isn't filled with a systematic testing process, risks stay invisible.

Context knowledge is missing. AI doesn't know the full context of the project. The code it produces may be generally correct but may not reflect project-specific edge cases, business rules, or the nuances of the existing system.

Edge case coverage is weak. AI writes the happy path well. Unless asked, it generally leaves empty input, null values, network errors, and concurrent request scenarios unhandled.

Integration blindness. AI-produced code may work on its own, but whether it integrates with existing modules can't be known without testing. Integration issues that aren't caught earlier surface in production.

Confidence fallacy. The perception that "AI wrote it, so it has fewer errors" loosens the testing process. But AI-produced code is not necessarily less error-prone than hand-written code. It tends to contain different types of errors.
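The edge-case gap described above can be illustrated with a small sketch. Everything here is hypothetical: `parse_quantity` stands in for a first AI draft, `parse_quantity_checked` for the version written after the edge cases were spelled out, and the limit of 1000 is an assumed business rule.

```python
def parse_quantity(raw):
    """Happy-path version: assumes the input is a clean numeric string."""
    return int(raw)


def parse_quantity_checked(raw, max_value=1000):
    """Same function once empty, invalid, and out-of-range input is handled."""
    if raw is None or not str(raw).strip():
        raise ValueError("quantity is required")
    try:
        value = int(str(raw).strip())
    except ValueError:
        raise ValueError("quantity must be a whole number") from None
    if not 0 < value <= max_value:
        raise ValueError(f"quantity must be between 1 and {max_value}")
    return value


# Inputs a happy-path test never exercises.
EDGE_CASES = [None, "", "   ", "abc", "-5", "0", "1001"]


def rejected_inputs(func):
    """Return the edge-case inputs that func rejects with an exception."""
    rejected = []
    for raw in EDGE_CASES:
        try:
            func(raw)
        except (ValueError, TypeError):
            rejected.append(raw)
    return rejected
```

Running `rejected_inputs` on both versions shows the difference: the happy-path version silently accepts negative, zero, and over-limit quantities, while the checked version rejects every input in `EDGE_CASES`.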

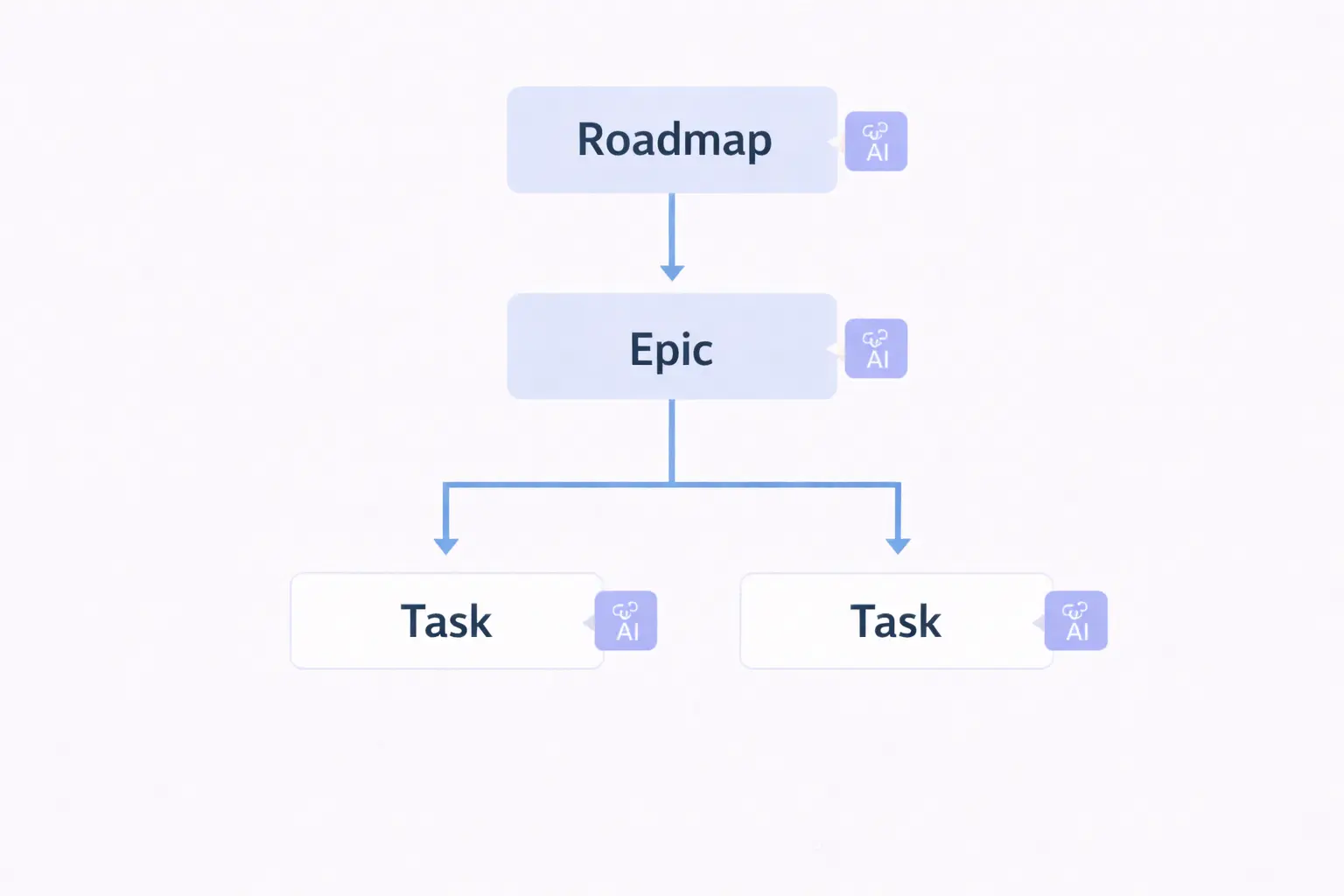

Quality Management Structure in Alios

AI code quality management in Alios works across three layers: test checklist nodes, bug nodes, and a four-stage status flow.

When this structure is set up for every AI-produced feature or module, the testing process becomes visible, trackable, and repeatable.

Four-Stage Status Flow

Every test and bug node lives in one of four statuses:

⬜ NOT_STARTED → not yet begun

🔄 IN_PROGRESS → actively being worked on

👀 REVIEW → complete, awaiting approval

✅ DONE → approved, closed

These four statuses apply to both test checklists and bug nodes. Because the same flow is used everywhere, there is nothing new to learn when moving between the two.
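As a rough sketch, the four statuses can be modeled as a small state machine. The transition table is an assumption made for illustration: the article only lists the statuses in order, so the step back from REVIEW to IN_PROGRESS (a reviewer rejecting the work) is hypothetical, and none of these names come from Alios itself.

```python
from enum import Enum


class Status(Enum):
    NOT_STARTED = "NOT_STARTED"
    IN_PROGRESS = "IN_PROGRESS"
    REVIEW = "REVIEW"
    DONE = "DONE"


# Assumed rules: move forward one stage at a time.
# REVIEW -> IN_PROGRESS models a rejected review (hypothetical).
ALLOWED = {
    Status.NOT_STARTED: {Status.IN_PROGRESS},
    Status.IN_PROGRESS: {Status.REVIEW},
    Status.REVIEW: {Status.DONE, Status.IN_PROGRESS},
    Status.DONE: set(),
}


def advance(current, target):
    """Return target if the move is allowed, otherwise raise ValueError."""
    if target not in ALLOWED[current]:
        raise ValueError(f"{current.value} -> {target.value} is not allowed")
    return target
```

With rules like these, a node cannot jump from IN_PROGRESS straight to DONE, which keeps the approval step from being skipped.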

Test Checklist Node Template

A test checklist node gets opened for every significant piece of AI-produced code. This node isn't a task — it's a quality gate.

📌 TEST CHECKLIST — [Feature / Module Name]

Status: NOT_STARTED

AI Tool: [Claude / Cursor / Copilot / etc.]

Developer: [Name]

Tester: [Name — if different]

Deadline: [Test completion date]

Linked Node: [Related task or PR]

─────────────────────────────

🤖 AI CODE SUMMARY

What was produced:

[1-2 sentences — what AI wrote, with which prompt]

Context AI didn't have:

[Project-specific business rules, constraints, or

edge cases not given to AI]

Risk level:

[ ] Low — isolated, independent module

[ ] Medium — integration with existing system

[ ] High — affects critical business flow

─────────────────────────────

✅ FUNCTIONAL TEST

Happy Path:

- [ ] Core scenario working — [Description]

- [ ] Expected output verified

- [ ] UI/UX matches expectation (if applicable)

Edge Cases:

- [ ] Empty input / null value handling

- [ ] Maximum value / character limit

- [ ] Invalid format input

- [ ] Concurrent request / race condition

- [ ] [Project-specific edge case 1]

- [ ] [Project-specific edge case 2]

Error Handling:

- [ ] Network error — correct message shown

- [ ] Timeout — user informed

- [ ] Auth error — correct redirect

- [ ] Unexpected error — graceful degradation

─────────────────────────────

🔗 INTEGRATION TEST

- [ ] No conflict with existing modules

- [ ] Database operations working correctly

- [ ] API response format compatible

- [ ] Auth / Permission checks working

- [ ] [Project-specific integration 1]

─────────────────────────────

⚡ PERFORMANCE TEST

- [ ] Response time acceptable under normal load

- [ ] No N+1 query problem

- [ ] No signs of memory leak

- [ ] [Project-specific performance criterion]

─────────────────────────────

🔒 SECURITY CHECK

- [ ] Input sanitization in place

- [ ] No SQL injection / XSS risk

- [ ] Sensitive data not written to logs

- [ ] Permission check cannot be bypassed

─────────────────────────────

📋 TEST RESULT

Overall result:

[ ] All tests passed — ready for REVIEW

[ ] Bug found — bug nodes opened

[ ] Out-of-scope issue detected — noted

Test note:

[Notable behavior, finding, or observation from AI

code worth referencing in the future]

Bugs opened: [N]

Bug nodes: [List]

Checklist Usage Rhythm

Each test checklist node progresses in this sequence:

Developer opens PR →

Test checklist moves from NOT_STARTED to IN_PROGRESS →

Tests are run →

If bugs are found, separate bug nodes are opened →

When all items are complete, the checklist moves to REVIEW →

Tester or lead approves → DONE →

PR can be merged

A DONE test checklist is required before the PR can be merged. This rule makes the system itself ask "was it tested?" instead of relying on someone to remember to ask.
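The merge rule above could be enforced with a check along these lines. This is a minimal sketch under stated assumptions: `ChecklistNode` and `can_merge` are hypothetical names, not an Alios API, and the checklist is reduced to a mapping from item text to a checked flag.

```python
from dataclasses import dataclass, field


@dataclass
class ChecklistNode:
    feature: str
    status: str = "NOT_STARTED"
    # Maps checklist item text to True (checked) or False (unchecked).
    items: dict = field(default_factory=dict)

    def unchecked(self):
        """Items that still block the move to REVIEW."""
        return [name for name, done in self.items.items() if not done]

    def ready_for_review(self):
        """True when there is at least one item and all items are checked."""
        return bool(self.items) and not self.unchecked()


def can_merge(checklist):
    """PR merge gate: only a DONE checklist lets the PR through."""
    return checklist.status == "DONE"
```

A CI step or merge hook could call `can_merge` and fail when it returns False, so the question "was it tested?" is answered by the checklist status rather than by memory.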

Bug Node Template

Every bug found during testing gets opened as a separate node. The test checklist only links to it. The details are tracked in the bug node itself.

📌 BUG — [Number]: [Short, specific title]

Status: NOT_STARTED

Priority: [ ] Critical [ ] High [ ] Medium [ ] Low

AI Generated Code: [ ] Yes [ ] Partially [ ] No

Found by: [Name] — Date: [Date]

Assigned to: [Developer]

Deadline: [Expected fix date]

Linked Checklist: [Test checklist node name]

🔍 ENVIRONMENT

Platform: [Web / iOS / Android]

Browser: [Chrome 122 / Safari 17 / etc.]

Version / Branch: [Which build]

Account type: [Admin / User / Trial]

URL: [Page where bug appeared]

🔁 REPRODUCTION STEPS

1. [First step — specific]

2. [Second step]

3. [Third step]

4. [Step that triggers the bug]

Reproduction frequency:

[ ] Always reproduces

[ ] Sometimes — approximately [N]%

[ ] Observed once

✅ EXPECTED BEHAVIOR

[What should have happened — clear and specific]

❌ ACTUAL BEHAVIOR

[What happened — screenshot or video attached if available]

📋 LOG / ERROR OUTPUT

Console error:

[Copied error message — if available]

Network request:

[Relevant API call and response — if available]

Server log:

[Relevant log lines — if available]

🤖 AI CONNECTION ANALYSIS

Does this bug originate from AI-generated code?

[ ] Yes — AI didn't handle the edge case

[ ] Yes — AI didn't have project-specific context

[ ] Partially — AI code was correct, integration issue

[ ] No — not related to AI

What should have been added to the AI prompt?

[What context or constraint would have prevented

this bug — learning note for future prompts]

✅ ACCEPTANCE CRITERIA

Fix is considered complete when:

- [ ] Reproduction steps repeated — bug not reproducible

- [ ] Expected behavior is happening

- [ ] Also works correctly in [edge case] condition

- [ ] Regression: [Potentially affected feature] still works

- [ ] No new errors in logs

🔧 DEVELOPER NOTE (after fix)

Root cause: [What was causing what]

Solution: [What was changed]

PR: [Link]

AI prompt updated: [ ] Yes [ ] No [ ] N/A

Why the AI Connection Analysis Matters

The "AI Connection Analysis" section isn't found in standard bug templates. But it's critical in AI-assisted development.

When the question "what should have been told to AI?" is asked for every bug, two things happen: the team learns to use AI better, and the same bug category doesn't get produced again. This section isn't an error record — it's a learning record.
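One way to turn these per-bug notes into something reusable is to aggregate them. The sketch below assumes each bug is a plain dictionary. The `ai_connection` keys (`edge_case`, `missing_context`, `integration`, `not_ai`) are hypothetical labels for the four checkboxes in the template, and `prompt_lesson` is a hypothetical field holding the answer to "what should have been added to the AI prompt?".

```python
from collections import Counter

# Hypothetical keys for the AI-related checkboxes in the bug template.
AI_CAUSES = ("edge_case", "missing_context", "integration")


def most_common_ai_cause(bugs):
    """Return the AI-related cause seen most often, or None if there is none."""
    counts = Counter(
        bug["ai_connection"] for bug in bugs if bug["ai_connection"] in AI_CAUSES
    )
    return counts.most_common(1)[0][0] if counts else None


def prompt_lessons(bugs):
    """Collect distinct prompt notes from AI-related bugs, in first-seen order."""
    lessons = []
    for bug in bugs:
        note = bug.get("prompt_lesson", "").strip()
        if bug["ai_connection"] in AI_CAUSES and note and note not in lessons:
            lessons.append(note)
    return lessons
```

The list returned by `prompt_lessons` could be pasted into a shared prompt preamble, so the context that was missing once is supplied by default next time.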

Status Flow: From Test to DONE

Here's how test checklists and bug nodes work together:

📁 FEATURE: User Invitation System

│

├── 📌 TEST CHECKLIST — Invitation System

│ Status: IN_PROGRESS → REVIEW → DONE

│ Tester: Zeynep

│ Bugs found: 2

│

├── 📌 BUG-001: Invalid email format is accepted

│ Status: NOT_STARTED → IN_PROGRESS → REVIEW → DONE

│ Priority: High

│ AI connection: Yes — validation not specified in prompt

│

└── 📌 BUG-002: Invite link still active after 24 hours

Status: NOT_STARTED → IN_PROGRESS → REVIEW → DONE

Priority: Medium

AI connection: Partially — expiry logic missing

For a feature to be considered DONE, its test checklist must be DONE and all Critical and High priority bug nodes must be DONE. Medium and Low bugs can go to the backlog but stay on record.
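The completion rule for a feature can be written down as a short check. This is a sketch under assumptions: bugs are represented as hypothetical `(priority, status)` tuples, and the function names are illustrative only.

```python
# Priorities that block a feature from being DONE, per the rule above.
BLOCKING = {"Critical", "High"}


def feature_is_done(checklist_status, bugs):
    """True when the checklist is DONE and every blocking bug is DONE."""
    if checklist_status != "DONE":
        return False
    return all(status == "DONE" for priority, status in bugs if priority in BLOCKING)


def backlog(bugs):
    """Open Medium and Low bugs that stay on record after the feature closes."""
    return [
        (priority, status)
        for priority, status in bugs
        if priority not in BLOCKING and status != "DONE"
    ]
```

For example, a feature with a DONE checklist, one High bug that is DONE, and one Medium bug still IN_PROGRESS counts as done, and the Medium bug shows up in `backlog`.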

Sprint-Level Quality Tracking

At the end of each sprint, the test and bug nodes roll up into this summary:

📊 SPRINT [N] QUALITY SUMMARY

AI-generated code ratio: [N]%

Test checklists:

- Opened: [N]

- Completed: [N]

- Carrying over: [N]

Bug summary:

- Total found: [N]

- Critical: [N] → Closed: [N]

- High: [N] → Closed: [N]

- Medium + Low backlog: [N]

AI-connected bug rate: [N]%

Learning note:

[Which error category appeared most in AI code this sprint]

[What will be added to AI prompts next sprint]

When this summary is compared with the one from two sprints later, the impact of AI usage on quality becomes concrete. If the "AI-connected bug rate" is dropping, the team is learning to use AI better.
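The bug figures in the summary can be derived directly from the bug nodes. The sketch below assumes each bug is a dictionary with `priority`, `status`, and a boolean `ai_connected` field. These field names are illustrative and not an Alios schema.

```python
def sprint_quality_summary(bugs):
    """Summarize one sprint's bug nodes into the figures used in the template."""
    total = len(bugs)
    ai_connected = sum(1 for bug in bugs if bug["ai_connected"])
    summary = {
        "total_found": total,
        # Percentage of bugs traced back to AI-generated code.
        "ai_connected_rate": round(100 * ai_connected / total, 1) if total else 0.0,
    }
    for priority in ("Critical", "High"):
        found = [bug for bug in bugs if bug["priority"] == priority]
        closed = [bug for bug in found if bug["status"] == "DONE"]
        summary[priority] = {"found": len(found), "closed": len(closed)}
    # Medium and Low bugs that are still open go to the backlog count.
    summary["backlog"] = sum(
        1
        for bug in bugs
        if bug["priority"] in ("Medium", "Low") and bug["status"] != "DONE"
    )
    return summary


def rate_is_dropping(earlier, later):
    """True when the AI-connected bug rate fell between two sprint summaries."""
    return later["ai_connected_rate"] < earlier["ai_connected_rate"]
```

Calling `rate_is_dropping` with the summaries of two sprints gives the comparison described above as a single boolean, which is easy to surface in a retrospective.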

Final Thought

AI increases code writing speed. It doesn't eliminate testing responsibility.

Test checklist nodes and bug nodes in Alios make this responsibility visible and trackable. Every piece of AI-produced code passes through a quality gate. Every bug becomes a learning opportunity. Quality stops depending on individuals and becomes part of the system.

The assumption "AI wrote it, it works" gets replaced by the reality "AI wrote it, we tested it, it works."